What Happen

Product Design & UX Design | Collaborators: Shuqi Chen, Jialiang Li, Jiiafeng Lin

OVERVIEW

“What happen?” is a location-based service (LBS) application for publishing activities and prompting users about them. Specifically, it automatically gets the user's location. When a user arrives somewhere and activities are about to happen there, “What happen?” sends the user a notification. It is also an activity publisher: associations and organizations at SYSU can post their activities here.

PROBLEM SPACE

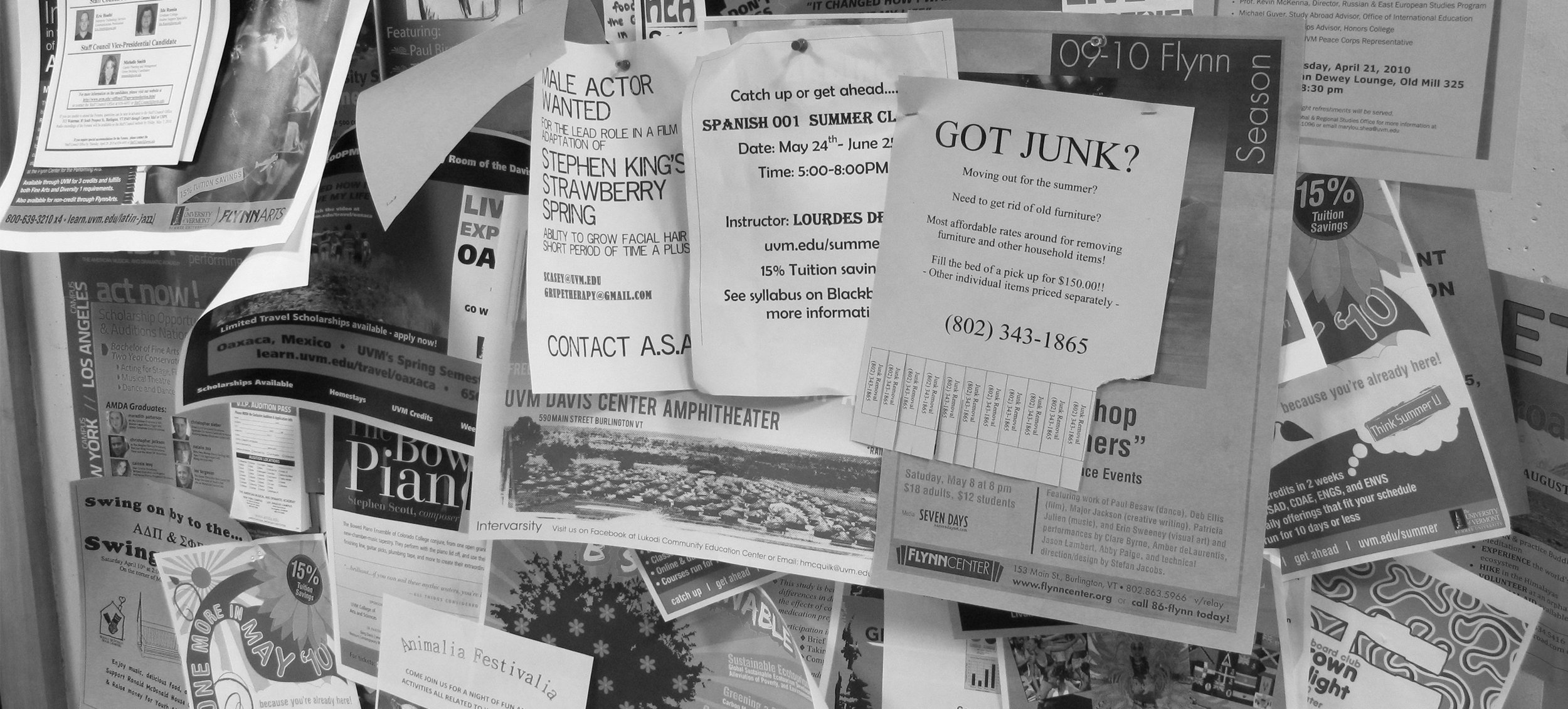

You might be familiar with this scenario: you glance at the bulletin board in your school before class and see that an exciting animal festival is on this week. When class is over, you want more details about the event. It takes you 30 minutes to find the poster you just saw, because it has been covered by dozens of other posters in the last 40 minutes! Yes, this happens to us. I usually have to take out my notebook and write down the info as soon as I see something I'm interested in, or I will miss it, too. We believed this problem should be tackled, so we analyzed it, identified unmet needs, and defined touch points, opportunities and constraints for our design.

VISION

By the end of the problem space analysis, we had already thrown a few assumptions out of the window and had enough information to start sketching out some ideas. We identified a few goals for our app:

- Automatically connecting events and users

- Showing what is going to happen at the user's current location

- Sending notifications for events the user has marked as interesting

IDEATION

After several rounds of brainstorming, we settled on the idea of using location as the intersection point between students and events. When you find that something is happening, or is about to take place, right where you are standing, you are more likely to take part. Also, GPS is built into nearly every smartphone, so looking up event information by location is straightforward and convenient.
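To make the location idea concrete, here is a minimal sketch of how events could be matched to a user's position. It is an illustration only, not the app's actual implementation: the `Event` fields, the `nearby_events` function, and the 200-meter radius are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass
class Event:
    # Hypothetical event record; field names are assumptions for this sketch.
    title: str
    lat: float
    lon: float
    start: datetime
    end: datetime


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points (haversine formula)."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))


def nearby_events(events, user_lat, user_lon, now, radius_m=200):
    """Return events within radius_m of the user that have not ended yet,
    ordered by start time. The default radius is an assumed value."""
    matches = [
        e for e in events
        if e.end >= now and distance_m(user_lat, user_lon, e.lat, e.lon) <= radius_m
    ]
    return sorted(matches, key=lambda e: e.start)
```

Calling `nearby_events(events, user_lat, user_lon, datetime.now())` with the phone's current coordinates would give the list of events the app could surface or notify about at that spot.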

We decided to divide the process into two parts - events happening now and events in the future. Here's the basic storyboard:

LO-FI PROTOTYPING

After deciding on the LBS idea and identifying the basic storyboard, we started to produce low-fidelity, scenario-driven wireframes on paper. This process allowed us to give form to the big idea and discover potential problems along the way.

HI-FI PROTOTYPING

We spent a long time on the high-fidelity prototype. This figure shows the main interaction screens of our app: